Let's explore how Precision, Recall, and F1 Score can give a realistic view of a model’s predictive power.

You'll also gain practical skills to generate these metrics using Scikit-Learn.

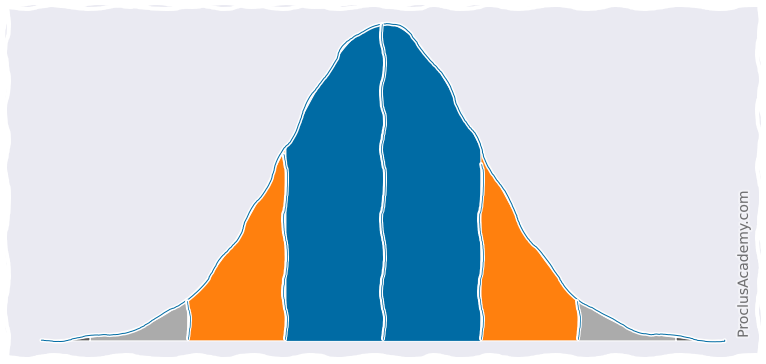
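
As a rough sketch of what generating these metrics can look like, the snippet below computes Precision, Recall, and F1 Score with Scikit-Learn. The labels in `y_true` and `y_pred` are made up purely for illustration.

```python
from sklearn.metrics import f1_score, precision_score, recall_score

# Hypothetical labels, invented for this example (1 = positive class)
y_true = [1, 0, 1, 1, 0, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0, 1, 0]

# Precision: of everything predicted positive, how much was truly positive?
precision = precision_score(y_true, y_pred)
# Recall: of everything truly positive, how much did the model find?
recall = recall_score(y_true, y_pred)
# F1 Score: harmonic mean of Precision and Recall
f1 = f1_score(y_true, y_pred)

print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

Here the model makes one false positive and one false negative against four true positives, so all three metrics come out to 0.80.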

You'll also learn about the Empirical Rule, which describes what share of normally distributed values falls within one, two, and three standard deviations of the mean.

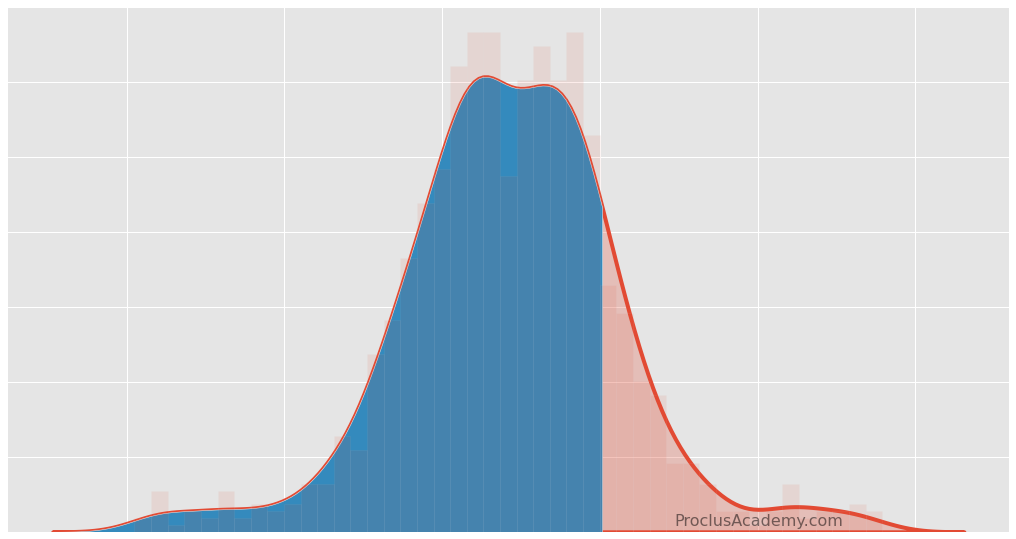
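
One quick way to see the Empirical Rule in action is to draw a large normal sample and count how many values land within one, two, and three standard deviations of the mean. This is a minimal sketch; the mean of 50, the standard deviation of 10, and the sample size are arbitrary choices made for illustration.

```python
import numpy as np

rng = np.random.default_rng(seed=0)  # fixed seed so the run is repeatable
# Hypothetical data: 100,000 draws from a normal distribution
values = rng.normal(loc=50.0, scale=10.0, size=100_000)

mean = values.mean()
std = values.std()

coverage = {}
for k in (1, 2, 3):
    # Boolean mask of the values within k standard deviations of the mean
    within = np.abs(values - mean) <= k * std
    coverage[k] = within.mean()
    print(f"within {k} standard deviation(s): {coverage[k]:.3f}")
```

The printed shares should land close to the familiar 68%, 95%, and 99.7%.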

You'll also learn to plot and analyze partial areas under the curve using Matplotlib, Seaborn, and NumPy.

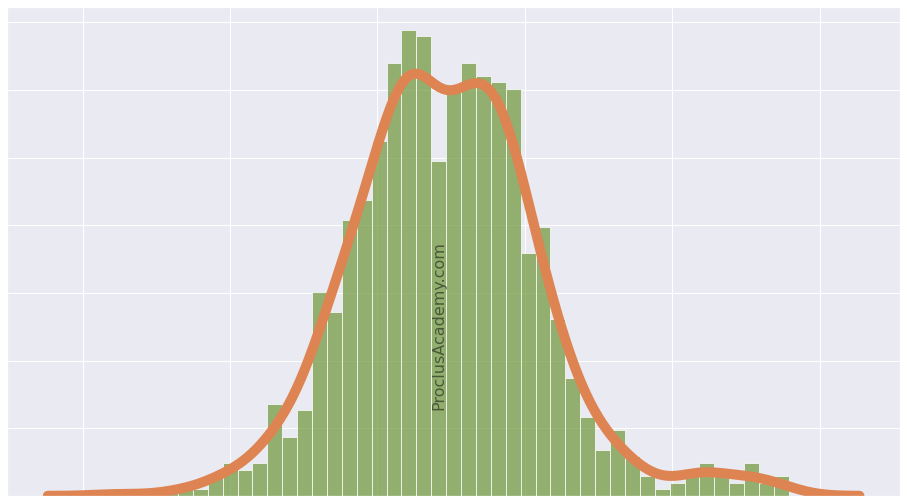
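
To give a feel for the analysis side, here is a small NumPy-only sketch that estimates a partial area numerically. It assumes the curve in question is the normal density; `normal_pdf` and `partial_area` are hypothetical helper names, not functions from any library.

```python
import numpy as np

def normal_pdf(x, mu=0.0, sigma=1.0):
    """Density of a normal distribution with mean mu and standard deviation sigma."""
    return np.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))

def partial_area(a, b, mu=0.0, sigma=1.0, points=2001):
    """Approximate the area under the normal curve between a and b."""
    x = np.linspace(a, b, points)
    y = normal_pdf(x, mu, sigma)
    # Trapezoidal rule written out by hand: average height times width per slice
    return float(np.sum((y[1:] + y[:-1]) / 2.0 * np.diff(x)))

area_1sd = partial_area(-1.0, 1.0)   # area within one standard deviation
area_tail = partial_area(2.0, 8.0)   # right tail beyond two standard deviations
print(f"{area_1sd:.4f} {area_tail:.4f}")
```

To shade the same region on a plot, Matplotlib's `fill_between` can be given the `x` and `y` arrays for the interval of interest.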

You'll also learn to visualize a distribution as a histogram and a density curve using Matplotlib and Seaborn.

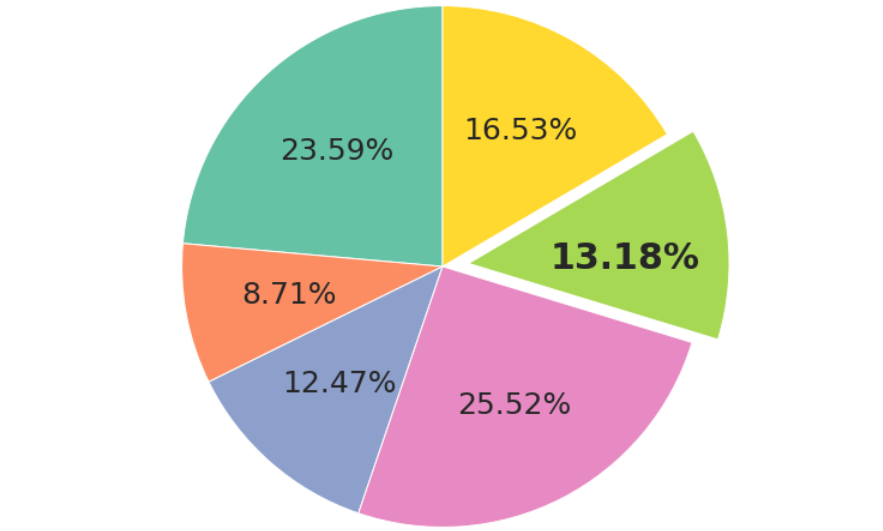
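
A minimal sketch of the idea using Matplotlib alone: a density-scaled histogram with a curve drawn over it. The data is simulated, and the curve here is a normal density fitted from the sample mean and standard deviation, which is a simplification rather than a kernel density estimate. The `Agg` backend is an assumption so the script also runs without a display.

```python
import matplotlib
matplotlib.use("Agg")  # assumption: headless backend, no window needed
import matplotlib.pyplot as plt
import numpy as np

rng = np.random.default_rng(seed=1)
data = rng.normal(loc=170.0, scale=8.0, size=2_000)  # hypothetical measurements

fig, ax = plt.subplots()
# density=True rescales the bars so that their total area equals 1
heights, edges, _ = ax.hist(data, bins=30, density=True, alpha=0.5, label="histogram")

# Overlay a normal density using the sample mean and standard deviation
x = np.linspace(data.min(), data.max(), 300)
mu, sigma = data.mean(), data.std()
curve = np.exp(-0.5 * ((x - mu) / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))
ax.plot(x, curve, label="fitted normal curve")

ax.set_xlabel("value")
ax.set_ylabel("density")
ax.legend()
fig.savefig("histogram_density.png")
```

With Seaborn, `sns.histplot(data, kde=True)` draws a histogram with a kernel density estimate on top in a single call.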

Along the way, you'll see what an exploding pie chart is and how to draw one. Finally, you'll learn to plot donut charts!
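
As a taste of both chart types, the sketch below draws an exploding pie chart and a donut chart side by side with Matplotlib. The labels and sizes are invented for illustration, and the `Agg` backend is an assumption so the script runs without a display.

```python
import matplotlib
matplotlib.use("Agg")  # assumption: headless backend, no window needed
import matplotlib.pyplot as plt

# Hypothetical categories and shares
labels = ["A", "B", "C", "D"]
sizes = [40, 30, 20, 10]
# Offset of each wedge from the center, as a fraction of the radius;
# only the first wedge is pulled out ("exploded")
explode = [0.1, 0, 0, 0]

fig, (ax_pie, ax_donut) = plt.subplots(1, 2, figsize=(9, 4))

ax_pie.pie(sizes, labels=labels, explode=explode, autopct="%1.0f%%", startangle=90)
ax_pie.set_title("Exploding pie chart")

# A wedge width smaller than the radius leaves a hole in the middle
ax_donut.pie(sizes, labels=labels, startangle=90, wedgeprops={"width": 0.4})
ax_donut.set_title("Donut chart")

fig.savefig("pie_and_donut.png")
```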

You'll also develop practical skills and learn how to sample data using Python and Pandas.
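
For a flavor of what sampling with Pandas can look like, here is a short sketch on a made-up DataFrame. The column names `id` and `group` are hypothetical; the calls shown are simple random sampling by count, by fraction, and per group.

```python
import pandas as pd

# Hypothetical dataset: 100 rows, 70 in group "a" and 30 in group "b"
df = pd.DataFrame({"id": range(100), "group": ["a"] * 70 + ["b"] * 30})

# Simple random sample of a fixed number of rows
random_rows = df.sample(n=10, random_state=42)

# Simple random sample of a fraction of the rows
random_frac = df.sample(frac=0.2, random_state=42)

# Stratified sample: draw the same fraction from every group
stratified = df.groupby("group").sample(frac=0.1, random_state=42)

print(stratified["group"].value_counts())
```

Setting `random_state` keeps the draw repeatable, which helps when comparing results between runs.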

You can even produce datasets that are harder to classify!
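
One possible way to do this, assuming Scikit-Learn's `make_classification` is the generator in use, is to lower `class_sep` (bringing the classes closer together) and raise `flip_y` (randomly reassigning a share of the labels). The specific values below are arbitrary.

```python
from sklearn.datasets import make_classification

# Settings shared by both datasets (values chosen only for illustration)
common = dict(n_samples=500, n_features=10, n_informative=5,
              n_redundant=2, random_state=0)

# Well-separated classes and no label noise: generally easier to classify
X_easy, y_easy = make_classification(class_sep=2.0, flip_y=0.0, **common)

# Classes closer together plus 10% randomly reassigned labels: generally harder
X_hard, y_hard = make_classification(class_sep=0.5, flip_y=0.1, **common)

print(X_easy.shape, X_hard.shape)
```

Training the same classifier on both datasets and comparing its scores is a simple way to check how much harder the second one is.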

I'll also show you two different ways to visualize the Confusion Matrix.
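
As an illustration only, and not necessarily either of the two ways covered, this sketch computes a Confusion Matrix with Scikit-Learn and draws it with `ConfusionMatrixDisplay`. The labels are made up, and the `Agg` backend is an assumption so the script runs without a display.

```python
import matplotlib
matplotlib.use("Agg")  # assumption: headless backend, no window needed
from sklearn.metrics import ConfusionMatrixDisplay, confusion_matrix

# Hypothetical labels, invented for this example (1 = positive class)
y_true = [1, 0, 1, 1, 0, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0, 1, 0]

# Rows are true classes, columns are predicted classes
cm = confusion_matrix(y_true, y_pred)
print(cm)

# Draw the matrix as a labeled, color-coded grid
display = ConfusionMatrixDisplay(confusion_matrix=cm,
                                 display_labels=["negative", "positive"])
display.plot(cmap="Blues")
display.figure_.savefig("confusion_matrix.png")
```

Seaborn's `heatmap` with `annot=True` is another common way to draw the same array.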

You'll also gain practical skills to generate and visualize these metrics using Scikit-Learn and Seaborn.